General Analysis, a San Francisco-based startup that has now raised $10 million in seed funding to address what it sees as a growing gap in enterprise security.

The round was led by Altos Ventures, with participation from 645 Ventures, Menlo Ventures, Y Combinator, and a group of early investors.

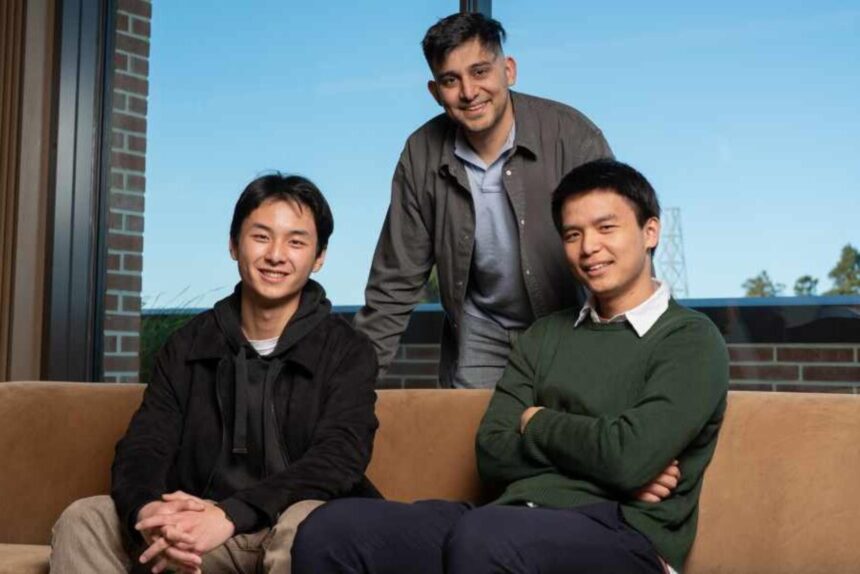

Founded by Rez Havaei, alongside Maximilian Li and Rex Liu, General Analysis is focused on a core challenge that AI agents do not behave like traditional software, and cannot be secured using conventional methods.

“Our position is that security for AI systems is an empirical problem. It has to be grounded in rigorous measurement of how those systems behave under realistic and adversarial conditions. You cannot prove an agent is safe,” said Maximilian Li. “You can only measure how often it fails, and how badly, and drive both numbers down.”

What You Need to Know

As companies increasingly deploy these systems across customer support, financial services, and internal operations, the stakes are rising. Unlike static software, AI agents continuously interpret inputs, generate outputs, and take actions that can vary significantly depending on context.

This dynamic behaviour makes vulnerabilities harder to detect in advance and failures more difficult to predict.

Traditional security approaches rely on systems that engineers can audit, reading code, tracing execution paths, and anticipating outcomes.

Agentic systems, by contrast, operate with a degree of autonomy that limits that level of control.

When AI Systems Fail, They Fail Differently

General Analysis’ research highlights how these risks are already emerging in real-world deployments.

In earlier work, the team demonstrated how a widely used integration in Cursor could be manipulated through a single malicious support request. The exploit allowed an internal AI agent to expose an entire private database, underscoring how easily systems can be compromised when they interact with untrusted inputs.

The vulnerability drew attention from industry observers, including Simon Willison, who described it as a “lethal trifecta”, a system that holds sensitive data, processes external input, and has the ability to communicate outward.

For security teams, the challenge lies in balancing control with usability. Restrict systems too heavily, and they lose effectiveness. Leave them too open, and the risks become difficult to quantify.

Measuring Risk Instead of Assuming Safety

General Analysis is taking a different approach to the problem, arguing that AI security cannot be guaranteed through static rules or design assumptions.

Instead, the company treats security as an empirical challenge, one that must be tested continuously under realistic and adversarial conditions.

“We hear from security teams that they want agents that are secure by design,” said Havaei. “What that often turns into in practice is a stack of isolation layers and ad hoc context restrictions that makes a system feel more controlled. Those measures either fail to eliminate the underlying vulnerability or constrain the agent enough to limit its usefulness. The problem is that feeling safer and being safer are not the same thing.”

Adversarial Testing as a Security Standard

The company’s model centres on running adversarial simulations against live systems, identifying failure points, and helping organisations determine which safeguards meaningfully reduce risk without undermining performance.

This approach reflects a broader shift in how security is being understood in the AI era, away from static defences and towards continuous testing and adaptation.

Rex Liu, co-founder of General Analysis, suggests that the transition to AI-driven workflows may ultimately improve security, despite the near-term risks.

“Many of those workflows were never especially secure to begin with, and their failures are often hard to observe or improve rigorously. But as those workflows become agentic, they also become more measurable and more improvable — which creates a path for businesses to become more secure in practice than they were before,” he said.

Investors Back a New Security Paradigm

Investors in the company see the rise of AI agents as a fundamental shift that requires a corresponding evolution in security practices.

General Analysis is already working with enterprise clients whose systems serve hundreds of millions of users, highlighting the scale at which these challenges are unfolding.

As AI agents take on more responsibility within organisations, the central question is beginning to change.

For General Analysis, the opportunity lies in helping organisations answer that question with data rather than assumptions.

Talking Points

It is significant that General Analysis is approaching AI agent security through adversarial testing rather than traditional static defence mechanisms, reflecting the evolving nature of modern enterprise systems.

This approach acknowledges a critical shift in software architecture, where AI agents no longer behave as predictable systems but as adaptive entities capable of dynamic decision-making under changing conditions.

At Techparley, we see this as an important signal that enterprise security is entering a new phase, one where continuous testing and real-world simulation will matter more than pre-deployment assurance.

However, there is still a delicate balance to be maintained. Over-securing agentic systems risks reducing their usefulness, while under-securing them exposes enterprises to unpredictable and potentially costly failures.

Adoption will therefore depend on how effectively organisations can integrate continuous adversarial testing into existing security workflows without slowing down deployment or limiting system performance.

As AI agents become more deeply embedded in enterprise operations, we see an opportunity for security models like this to become a foundational layer of trust, ensuring that innovation in automation does not come at the expense of operational safety and control.

——————-

Bookmark Techparley.com for the most insightful technology news from the African continent.

Follow us on Twitter @Techparleynews, on Facebook at Techparley Africa, on LinkedIn at Techparley Africa, or on Instagram at Techparleynews.